In systems neuroscience, we often need to build or modify mechanical support structures to hold devices in place: cameras, electrode manipulators, slides, lenses, headposts, etc. Almost universally, these structures need to keep relatively light devices or preparations at precise positions, which means they should not drift, vibrate, or deflect.

This is an extremely large topic and I am only going to cover some basic but concrete points here with the goal of clearing up some common misconceptions and providing some basis for making better design or purchasing decisions. Keep in mind that in a lot of cases it may be advisable to pay an expert to help design your rig or to look for existing solutions. Your time is expensive, and spending it to build something that you could buy is often a bad trade-off.

A lot of the more fundamental points in this post are made better by Dan Gelbart in his excellent video series ‘Building Prototypes’. If you have the time, watch these.

Stiffness vs. yield strength

People often spend their efforts in the wrong place when building instruments because they think that in order to reduce drift or vibration, the things they build need to just be ‘as strong as possible’, which means that the best structure would be the one that can hold the most weight. This is usually a misconception because what matters when building instruments is stiffness at low stress/strain, not strength.

The figure below shows a stress vs. strain diagram for some material. The terms are a bit unintuitive, but in the context of thinking about the stiffness of a structure, this translates to the relationship between an applied force (stress, y-axis) in something like newtons (handwavy: 1 N ∼100 g) and the resulting deformation (strain, x-axis) in mm or μm. There are two major properties of any structure to consider:

- The yield strength (red region): This is the stress/strain at which the structure begins to permanently deform and fail. This point is important for building airplanes but almost completely irrelevant for building instruments.

- The elastic stiffness (black region): Think of stiffness as the force needed per unit of linear deflection; this holds even for small forces, where the structure springs back as soon as the force is removed. This is the metric that matters for us when building things in the lab because it governs how much (and at what frequency) structures vibrate or move in practical use when you're not leaning on them.

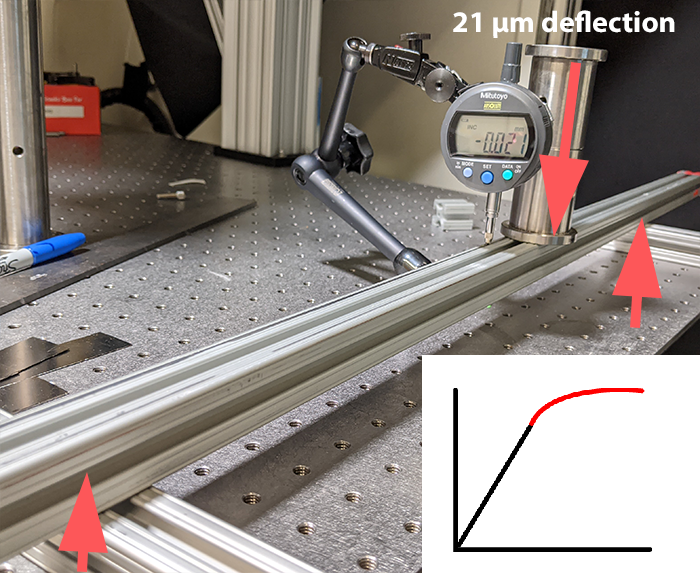

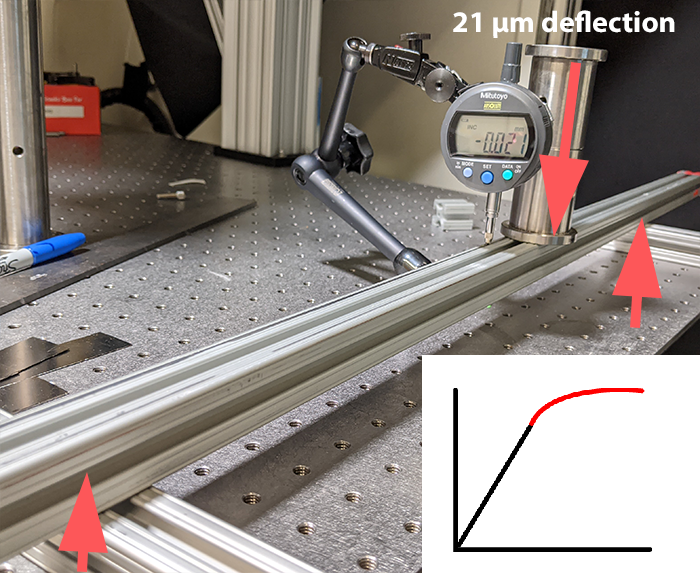

What you usually want is a steep slope / high stiffness, regardless of what happens in the red region, because we'll never get there. To really make this point clear, here's an example, stolen straight from Dan Gelbart's videos. I'm measuring the deflection of a single 80/20 rail, supported on both ends, with a weight of about 1 kg placed in the middle.

This beam is pretty strong, certainly impossible (for me) to break by hand, and we're getting a deflection of around 20 μm.

Now, instead of the single uninterrupted beam, let's use two beams held together only with rubber bands. I can push down on this with my hand and break it apart pretty easily.

However, we get only about half the deflection from the weight. Even though the yield strength of this rubber-banded beam is quite pathetic, as long as the two pieces keep touching, both of them carry the load, so the stiffness roughly doubles. In other words, the slope of the elastic, linear bottom portion of the stress vs. strain curve is steeper, and the beam is stiffer. This insane-looking rubber band contraption really would be about twice as good at holding up a microscope slide as the 'stronger' uninterrupted beam, as long as the rubber bands stay in place.
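Treating each beam as a linear spring makes the arithmetic explicit. Here is a minimal sketch, using the rough numbers from the measurement above (about 1 kg and about 20 μm) and assuming the two rails simply share the load like two springs in parallel. Note that nothing in it depends on yield strength:

```python
G = 9.81  # standard gravity, m/s^2


def stiffness(force_n: float, deflection_m: float) -> float:
    """Stiffness k = F / deflection, in N/m."""
    return force_n / deflection_m


def parallel(*stiffnesses: float) -> float:
    """Springs that share one load in parallel: their stiffnesses add."""
    return sum(stiffnesses)


load = 1.0 * G                          # ~1 kg placed mid-span, in N
k_single = stiffness(load, 20e-6)       # single rail, ~20 um measured deflection
k_pair = parallel(k_single, k_single)   # two rails, both carrying the load

print(f"single rail: {k_single / 1e6:.2f} N/um")
print(f"two rails:   {load / k_pair * 1e6:.1f} um deflection")
```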

Some other examples of the same principle:

- Attaching a piece of equipment to a structure with a spring loaded clamp is as stable as using a bunch of M6 bolts (until you bump it with an elbow of course).

- ‘Properly’ tightening screws the way you would on a bike or car is almost always unnecessary.

- Using adhesives, even on questionably suitable surfaces such as smooth metal, is often as stiff as using screws, as long as the glue film is very thin and stiff (serviceability is another matter, of course).

- For an optical post base, or anything screwed into a breadboard, if the base is flat and in contact with the table already, adding more screws/clamps is not going to do anything. This is why clamping forks work.

It is important to consider where stiffness is needed and where it is not. In the following, we will assume that stability under light loads is important, as for example in an electrophysiology setup or a microscope. In many applications this is not the case: e.g. a camera for rough behavior tracking, mounted on a plastic bracket held in place with epoxy, is perfectly functional even if the plastic deforms a bit when you move the camera's USB cable.

Everything deflects and vibrates

As the example above shows, even a pretty light weight bends a 1″ rail enough to ruin many of the recordings or optical measurements we encounter in neuroscience. Different applications have vastly different tolerances, but in almost all cases it is useful to remember that everything (a piece of paper, a thick bar of steel, stereotax arms, manipulators, your microscope, tables, the floor of your room) bends in the same way as soon as any force is applied. The only question is by how much. This is why air tables have to be extremely thick to stay flat. The trick is to keep things from bending too much by putting material where it best resists the deformation that would cause issues.

An important baseline assumption to keep in mind here is that rooms and tables (even optical tables) are always moving or vibrating a little bit. This means that even in the absence of explicit forces, structures tend to move around at their resonant frequency, so it is often important to make structures stiff even if you do not expect dynamic loads. This mostly matters for microscopes and for electrophysiology.
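To connect stiffness and vibration, here is a rough single-degree-of-freedom estimate: a mass m on a spring of stiffness k resonates at f = √(k/m) / 2π. If that same mass is what causes the static sag δ, then k/m = g/δ, so the estimate depends only on the sag. This sketch ignores the beam's own mass and any damping, so treat the numbers as order-of-magnitude only; the 20 μm and 10 μm sags reuse the rail example above, and 1 μm is just a hypothetical stiffer case:

```python
import math

G = 9.81  # standard gravity, m/s^2


def natural_frequency_hz(k: float, m: float) -> float:
    """Undamped natural frequency of a mass-spring system, f = sqrt(k/m) / (2*pi)."""
    return math.sqrt(k / m) / (2 * math.pi)


def natural_frequency_from_sag_hz(static_sag_m: float) -> float:
    """Same estimate when the supported mass itself causes the sag (k/m = g/sag)."""
    return math.sqrt(G / static_sag_m) / (2 * math.pi)


for sag_um in (20, 10, 1):
    f = natural_frequency_from_sag_hz(sag_um * 1e-6)
    print(f"{sag_um:>3} um sag -> ~{f:.0f} Hz")
```

Halving the sag (doubling the stiffness) raises the resonance by a factor of √2, so a stiffer structure both moves less and rings at a higher frequency.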

Material matters, but not as much as you might think

The stiffness of a material (the slope of stress vs. strain in the above diagram) is called its Young's modulus. Forgetting about the exact definition of the units, here are some approximate examples to give a sense of relative scale (all in GPa):

- Acrylic/Epoxy: 3

- Wood: 5-10

- Aluminum: 70 (easy to work with, cheap, does not corrode easily)

- Titanium: 100 (the downsides of aluminum and of steel, at an even higher price)

- Steel: 200 (hard to work with, expensive, corrodes unless you pay even more for stainless, which is even harder to work with)

Practically, this means that a structure made from steel is roughly three times as stiff as the same one made from aluminum (200 vs. 70 GPa). However, as we'll see, changes in the mechanical design of parts can easily yield improvements of an order of magnitude or more, which more than offsets this disadvantage.
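A sketch of why geometry tends to win, using the textbook formula for a simply supported solid rectangular beam with a point load at midspan, δ = FL³/(48EI) with I = bh³/12: deflection scales with 1/E, but with 1/h³ in the depth h. The bar dimensions, span and load below are hypothetical:

```python
E_AL, E_STEEL = 70e9, 200e9  # Young's moduli from the list above, Pa


def midspan_deflection(force_n, span_m, e_pa, width_m, depth_m):
    """Simply supported beam, point load at midspan: delta = F L^3 / (48 E I)."""
    second_moment = width_m * depth_m**3 / 12  # I of a solid rectangular section
    return force_n * span_m**3 / (48 * e_pa * second_moment)


# Hypothetical bar: 25 mm wide, 500 mm span, 10 N load at the middle
base = midspan_deflection(10, 0.5, E_AL, 0.025, 0.025)      # aluminum, 25 mm deep
steel = midspan_deflection(10, 0.5, E_STEEL, 0.025, 0.025)  # steel, same shape
deeper = midspan_deflection(10, 0.5, E_AL, 0.025, 0.050)    # aluminum, 50 mm deep

for label, d in [("aluminum, 25 mm deep", base), ("steel, 25 mm deep", steel),
                 ("aluminum, 50 mm deep", deeper)]:
    print(f"{label:<22} {d * 1e6:6.2f} um")
```

Switching aluminum for steel buys a factor of about 2.9; doubling the depth of the aluminum bar buys a factor of 8.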

Compared to metals, plastic deforms like crazy under load. Properly designed plastic parts can of course achieve precision, and 3D printing is nice, but plastics are largely at a huge disadvantage.

Aluminum is your friend

Aluminum, while only about a third as stiff as steel, is also only about a third as heavy, and usually less than half as expensive to build with. This means that for the same weight you can make an aluminum structure roughly three times as thick/wide/deep as a steel one, save money, and get a higher performance end result, since bending stiffness grows much faster than linearly with depth. The structural frames in some of the highest precision CNC machines in the world are made from aluminum, so it's likely good enough for us as well.
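Putting rough numbers on the weight argument: a minimal sketch comparing solid rectangular bars of equal width and equal mass, so the aluminum bar gets deeper by the density ratio. The densities (about 2700 kg/m³ for aluminum, 7850 kg/m³ for steel) are typical handbook values rather than numbers from this post, and real alloys vary a bit; the 10 mm steel depth is hypothetical and drops out of the ratio:

```python
RHO_AL, RHO_STEEL = 2700, 7850   # typical densities, kg/m^3 (assumed)
E_AL, E_STEEL = 70e9, 200e9      # Young's moduli from the list above, Pa

steel_depth = 0.010                              # hypothetical 10 mm deep steel bar
al_depth = steel_depth * RHO_STEEL / RHO_AL      # same width and mass -> ~2.9x deeper

# For the same beam layout, bending stiffness scales with E * I, and I ~ depth^3
ratio = (E_AL * al_depth**3) / (E_STEEL * steel_depth**3)

print(f"aluminum depth for equal mass: {al_depth * 1e3:.1f} mm")
print(f"aluminum / steel bending stiffness: {ratio:.1f}x")
```

For equal mass, the deeper aluminum bar comes out roughly 8 to 9 times stiffer in bending, even though its Young's modulus is only 70/200 of steel's.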

The other incredible upside of aluminum is that it can be modified quite easily with hand tools in a lab setting. Working with aluminum is almost as easy as working with wood, plus you can cut threads. This can help you solve problems extremely quickly and cheaply if you have a place to put a vise and you're comfortable making some metal chips:

- Get a decent hacksaw, and a file. You can now cut construction rails to length, or quickly cut basic shapes out of aluminum sheets.

- Get a cheap drill press or a hand drill, and a decent set of drill bits (a spotting bit, which does not wander around when starting holes, is nice to have, as are chamfer bits to clean up the edges of holes). Drilling holes in aluminum is easy and fast.

You can now make parts that should solve at least half of your basic construction needs in the lab, so fast that it can sometimes beat buying them.

Bracket made from extremely cheap aluminum stock, entirely by hand in <10 minutes. Works as well as an expensive commercial one, which would have taken about as long just to order. Fun side question: why are the two bolts enough?

- Get an M6 and an M3 tap and a tap handle, and learn how to tap holes. You can now make parts that attach to each other without nuts, and parts that can act as 'breadboards' to mount other stuff to. Use M6 wherever you can (these are easy to tap and hard to break), and M3 for small stuff (careful with the tap though, these snap easily).

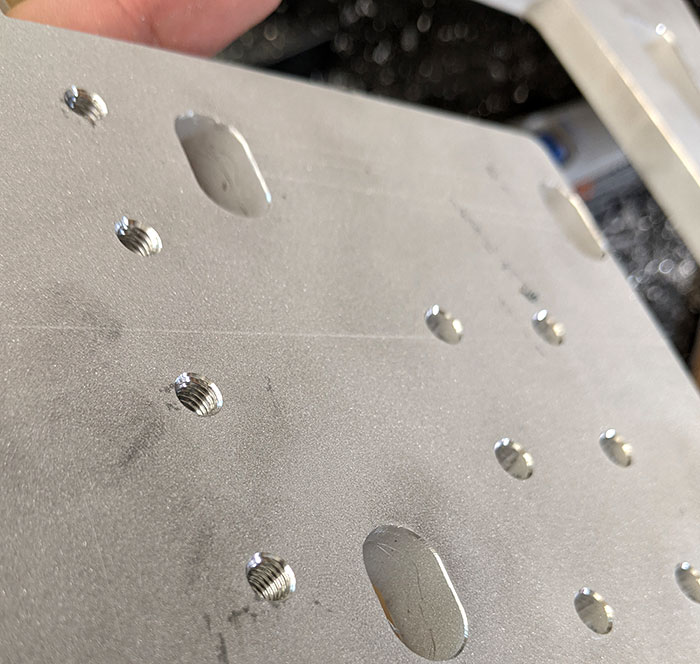

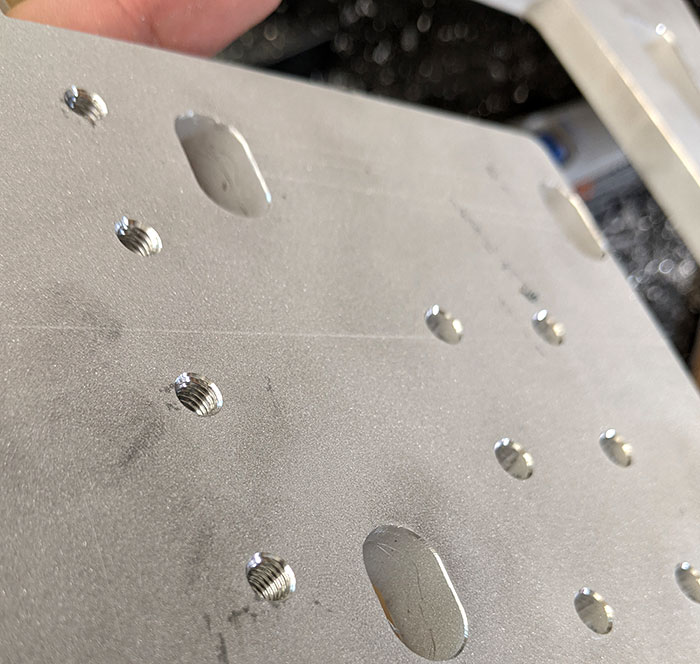

Tapped M6 holes in a waterjet cut piece of aluminum make extremely versatile parts.

Use 80/20 rails (or equivalent)

80/20 rail (generic name: 'T-slotted construction rail') is just amazing. These are extruded aluminum beams with a profile that allows screws to be added anywhere along their length with the help of special captive T-nuts. Using 80/20 is a whole topic in itself, and it's useful to look at some examples to get a sense of what can be done.

You can quickly make enclosures, tables, microscope stands, optical setups, cable management out of this stuff. It’s kind of cheap, and fast. If you keep a few lengths around, plus some brackets and screws, a hacksaw and a drill, you can fix issues that come up in your rig in very short order.

Pro tips:

- At least in the US, inch-based rail (1″ to 2″ profiles) is cheapest, and it works just fine with M6 T-nuts. If you mix 1/4-20 and M6 screws, it is a great idea to make a rule that, for example, there can only be black 1/4-20 nuts and silver M6 ones in your workspace.

- As outlined above, you can make brackets to connect several rails out of 1/4″ aluminum stock with a drill, or buy commercial ones. Flat 1/4″ plates are often more versatile than expensive 90-degree inside braces.

- Use the fact that the T-nuts can be repositioned freely to your advantage, for example think about what dimension might need to change later and align the brackets accordingly.

- The central opening (there’s one in the single-width ones, 2 or 4 in larger ones) in these extrusions is just about perfect to cut an M6 thread in, allowing us to attach adapter brackets or whatever at the end of rails.

- If your structure closes off both ends of a rail so you can’t later add captive nuts, it can be a good idea to leave an extra one or two sitting in there so if you need to attach something later you can use them, or have a few (more expensive) drop-in nuts at hand for such cases.

Geometry matters

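Because we often build things from beams, straight lines and triangles are good, and other shapes are usually bad: a triangle carries loads along the length of its members, stretching or compressing them instead of bending them, and beams are far stiffer in tension and compression than in bending.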

(Source: sparkfun)

As a simple example, here is a simulation of a 1.5 m high Thorlabs 95 mm beam, mounted on a base, with a 10 N side load. We get 71 μm of deflection. Adding a simple brace, made from a piece of 1″ 80/20 rail and a bracket, cuts this down to 1 μm. When in doubt about the stability of something, try adding braces that lead directly from the load to a fixed point. If you use 80/20 rail, this should be extremely easy.
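To see why the triangle wins, here is an idealized sketch comparing a post that resists a side load by bending (cantilever, k = 3EI/L³) with the same post braced into a pin-jointed triangle, where members only stretch or compress. The triangle's compliance comes from the unit-load method. Both members are a hypothetical solid 25 mm square aluminum bar, the brace angle is an assumed 45 degrees, and the joints are assumed perfect; real brackets and T-nuts add compliance, so expect far less than this ratio in practice:

```python
import math

E_AL = 70e9  # Young's modulus of aluminum, Pa

# Hypothetical solid 25 mm square aluminum bar, used for both post and brace
side = 0.025
area = side**2                  # cross-sectional area, m^2
second_moment = side**4 / 12    # area moment of inertia for bending, m^4
height = 1.5                    # post height, m; horizontal load applied at the top

# Post alone, bending as a cantilever with a tip load: k = 3 E I / L^3
k_post = 3 * E_AL * second_moment / height**3

# Ideal pin-jointed triangle: post plus a brace at `angle` from the floor.
# Per unit horizontal load at the top: brace force = 1/cos(angle),
# post force = tan(angle). Unit-load method: compliance = sum(n_i^2 * L_i) / (E A).
angle = math.radians(45)
brace_length = height / math.sin(angle)
compliance = (brace_length / math.cos(angle)**2
              + height * math.tan(angle)**2) / (E_AL * area)
k_triangle = 1 / compliance

print(f"post alone: {k_post / 1e6:.4f} N/um")
print(f"triangle:   {k_triangle / 1e6:.1f} N/um (~{k_triangle / k_post:.0f}x stiffer)")
```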

Waterjet cutting gets you extremely far

If you get to a point where 80/20 does not fit, waterjet cutting is a quite economical way to make one-off shapes out of aluminum (or other material) sheets. It is a great way to make complicated custom pieces from metal at a fraction of the cost or complication of CNC machining. Again, there's a dedicated Dan Gelbart video about this. I like to order from Big Blue Saw in the US because they quote immediately and stock a lot of material thicknesses, but just about any vendor should do.

As an unrealistic toy example, imagine you need to hold something 30 cm above an optical table and keep it stable under some side load, let's say a micromanipulator that is not allowed to vibrate. Let's compare a Thorlabs 1.5″ steel post (~$100, 1-2 days wait time) with a waterjet cut, 0.5″ thick aluminum plate (~$150, 30 min design time, 1 week wait time); mounting holes, a pair of 90-degree brackets for mounting it on the table, etc. are not shown here.

With a 10 N load, the 1.5″ steel post deflects by 40 μm, the aluminum plate by 0.2 μm. That's 200x stiffer, plus you can add whatever custom threaded holes you need for basically the same price, at the cost of being a less modular solution. This is of course an unfair comparison because of the advantageous geometry of the custom plate (and the longer lead time), but that is exactly why waterjet cut parts are great: you can easily get the geometry that works best.

Waterjet cutting also allows you to very easily add through- or tapped holes at specified locations: Just order the part with undersized ‘pilot holes’ cut with the waterjet and then finish (and optionally tap) them by hand.

Custom designed breadboard for a microscope made from 0.5″ aluminum, straight from the cutter. All the pilot holes were waterjet cut to a bit under 5 mm diameter (they come out of the waterjet cutter tapered and uneven), and will be hand-drilled either to M6 clearance (6.5 mm should do) or tapped to an M6 thread (drilled to ~5.25 mm and then tapped).
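When picking the drill for a tapped hole, a common shop formula for ISO metric threads estimates how much thread engagement a given drill leaves: percent thread ≈ 76.98 × (D − d) / P, with D the nominal diameter, d the drill diameter and P the pitch. A small sketch; the standard coarse-pitch tap drills (5.0 mm for M6 × 1.0, 2.5 mm for M3 × 0.5) are textbook values rather than something from this post:

```python
def percent_thread(major_diameter_mm: float, pitch_mm: float, drill_mm: float) -> float:
    """Approximate ISO metric thread engagement for a given tap drill size.

    Common shop formula: percent thread = 76.98 * (D - drill) / P.
    """
    return 76.98 * (major_diameter_mm - drill_mm) / pitch_mm


# Coarse-pitch M6 (1.0 mm pitch) and M3 (0.5 mm pitch)
for name, d, p, drill in [("M6", 6.0, 1.0, 5.0), ("M6", 6.0, 1.0, 5.25),
                          ("M3", 3.0, 0.5, 2.5)]:
    print(f"{name} with {drill} mm drill: ~{percent_thread(d, p, drill):.0f}% thread")
```

The standard drills land around 77% thread; the ~5.25 mm drill mentioned above gives roughly 58%, which makes tapping easier at the cost of some thread depth.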

Should you? In building (mostly one-time use) scientific experiments, the most expensive line item is often your time. If you can solve the problem by spending a few extra bucks on Thorlabs or other commercial-off-the-shelf parts, or by screwing together some ugly 80/20 and getting going with your experiment, do that. Making custom waterjet or CNC parts, or messing around with 3D printing, is for cases where you need to go beyond existing solutions, and for those cases only.

How to: Start by making your part drawing and export it as a DXF file. Almost any software will do, but it helps if you can enter measurements; proper CAD tools like any of the Autodesk offerings or SolidWorks are ideal, but you can get pretty far using Illustrator or similar tools. Measure twice, order once, and when in doubt, add a few extra pilot holes in places where you might need to attach something. When you get the part, fix the mistakes you made in your initial drawing with your hacksaw and file.
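If your drawing tool cannot export DXF, or you want to generate a simple plate from a script, an ASCII DXF is just pairs of lines (a group code, then a value). Here is a minimal sketch, assuming a plain rectangular plate with round pilot holes; the plate size, hole grid and 4.8 mm pilot diameter are hypothetical. Many CAD programs and cutting services accept such a bare ENTITIES-only file, but not necessarily all of them, so for a real order a proper CAD export or a dedicated library such as ezdxf is the safer route, and it is worth opening the result in a viewer before sending it off:

```python
def _fmt(value) -> str:
    """DXF group values: strings as-is, numbers as fixed-point reals."""
    return value if isinstance(value, str) else f"{float(value):.4f}"


def plate_dxf(width: float, height: float,
              holes: list[tuple[float, float]], hole_diameter: float) -> str:
    """Return a bare ASCII DXF (ENTITIES section only) for a rectangular plate.

    The file carries no unit information; mm are assumed here, so tell the
    vendor which units you used.
    """
    pairs = [(0, "SECTION"), (2, "ENTITIES")]
    corners = [(0, 0), (width, 0), (width, height), (0, height)]
    # Outline: four LINE entities on layer "0" (codes 10/20/30 start, 11/21/31 end)
    for (x1, y1), (x2, y2) in zip(corners, corners[1:] + corners[:1]):
        pairs += [(0, "LINE"), (8, "0"), (10, x1), (20, y1), (30, 0),
                  (11, x2), (21, y2), (31, 0)]
    # Undersized pilot holes: CIRCLE entities (10/20/30 center, 40 radius)
    for x, y in holes:
        pairs += [(0, "CIRCLE"), (8, "0"), (10, x), (20, y), (30, 0),
                  (40, hole_diameter / 2)]
    pairs += [(0, "ENDSEC"), (0, "EOF")]
    return "".join(f"{code}\n{_fmt(value)}\n" for code, value in pairs)


# Hypothetical 150 x 100 mm plate, 4.8 mm pilot holes on a 25 mm grid
holes = [(x, y) for x in range(25, 150, 25) for y in range(25, 100, 25)]
with open("plate.dxf", "w") as f:
    f.write(plate_dxf(150, 100, holes, hole_diameter=4.8))
```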

Keep in mind that waterjet cut pieces are not guaranteed to be flat to tight tolerances, and the cut edges will all be tapered because the water jet spreads out towards the bottom. If you need the part to be flat, you might need to buy a proper breadboard or get your plate surface ground at a machine shop. However, for many lab applications, flatness is pretty irrelevant because the components of the experiment will usually be positioned individually relative to each other or to some other reference.